AI agents are often described as intelligent systems that can reason, plan, and act. But underneath all of those capabilities sits a fundamental limitation: large language models do not remember previous interactions. Every call to a model starts from a blank state, and the model only knows what is present in its current context window. If the relevant information is not included in that context, the model cannot use it. This constraint is what makes memory a core architectural component of any serious AI agent system. Without memory, agents cannot accumulate knowledge, track past decisions, or maintain continuity across tasks.

Why Memory Exists in Agent Systems

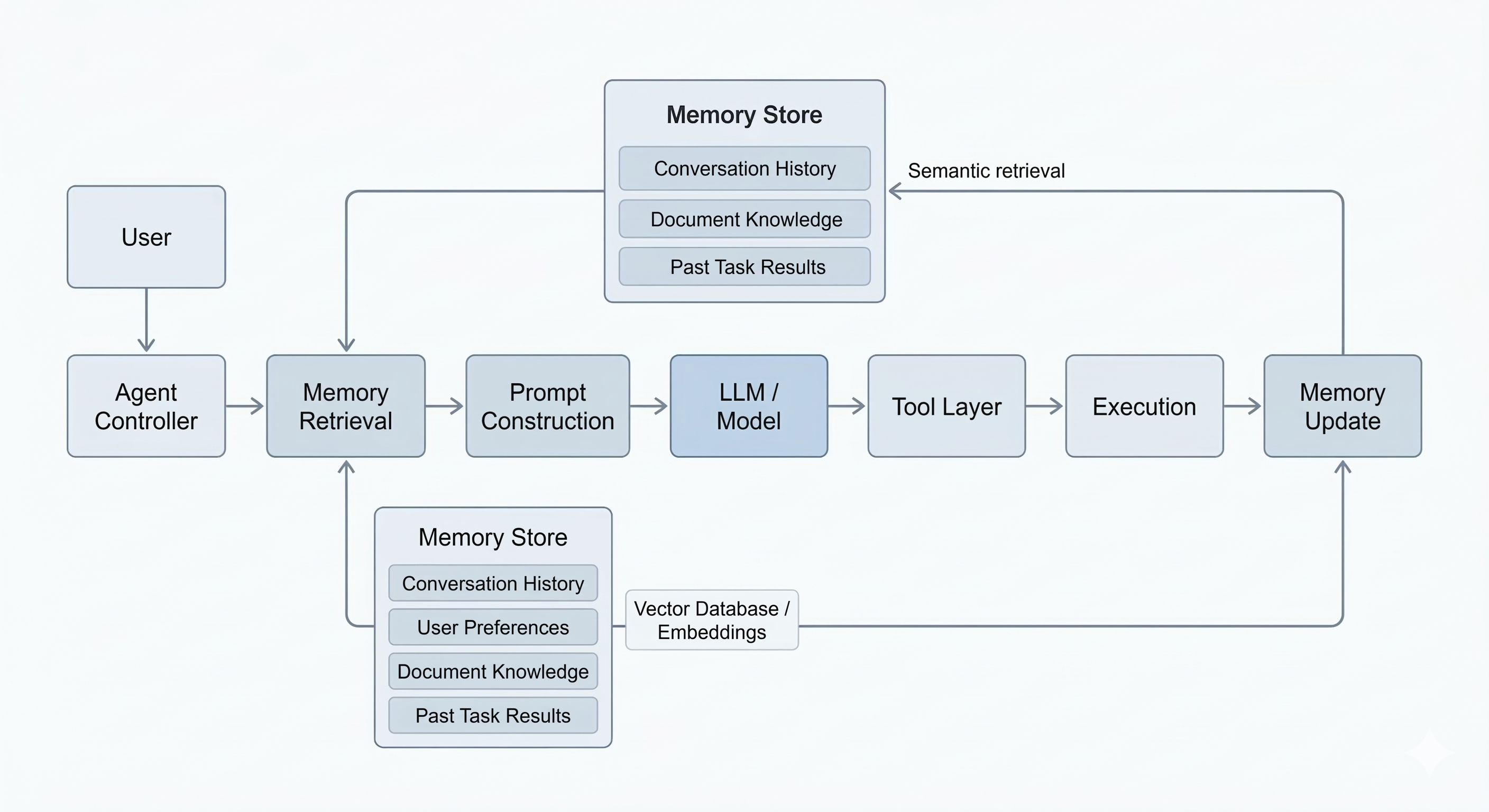

To understand why memory is necessary, it helps to look at what actually happens when a user interacts with an agent. A request moves through a pipeline that looks something like this:

User Input → Prompt Construction → Tokenization → Model Reasoning → Tool Execution → Response

The model processes tokens, generates an output, and then the interaction ends. There is no persistent state inside the model. If the user returns later and asks a follow-up question, the model has no awareness of the previous conversation unless that information is explicitly added back into the prompt. Memory systems exist to solve exactly this problem.

They allow an agent to store information outside the model and retrieve it when needed.

Memory Is an External System

One of the most important things to understand is that memory is not part of the model itself. Memory lives outside the model.

The agent architecture usually looks more like this:

User → Agent Controller → Memory Retrieval → Prompt Construction → Model → Tool Layer → Response

Before the model generates an answer, the system retrieves relevant information from memory and injects it into the prompt. This makes the model behave as if it remembers previous interactions. In reality, the memory system is doing the work.
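A minimal Python sketch of this idea follows. The model is a hypothetical `fake_model` function that sees nothing but the prompt string, and `ExternalMemory` is an illustrative stand-in for a real database; its `recall` method returns everything rather than searching for relevant items. The point is that any "remembering" happens in `build_prompt`, outside the model.

```python
from dataclasses import dataclass, field


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for an LLM call: it sees only the prompt text."""
    return f"[model saw {len(prompt.splitlines())} lines of context]"


@dataclass
class ExternalMemory:
    """Memory kept outside the model (a list here, a database in practice)."""
    facts: list[str] = field(default_factory=list)

    def remember(self, fact: str) -> None:
        self.facts.append(fact)

    def recall(self) -> list[str]:
        # A real system would search for relevant items; this returns everything.
        return list(self.facts)


def build_prompt(memories: list[str], user_input: str) -> str:
    """Inject retrieved memories ahead of the user's message."""
    memory_block = "\n".join(f"- {m}" for m in memories) or "- (none)"
    return f"Relevant memory:\n{memory_block}\n\nUser: {user_input}"


memory = ExternalMemory()
memory.remember("User's name is Dana.")
memory.remember("User prefers answers in metric units.")

prompt = build_prompt(memory.recall(), "How far is a marathon?")
print(prompt)
print(fake_model(prompt))
```

If `memory.recall()` were replaced with an empty list, the same model function would receive a prompt with no trace of the earlier facts.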

Types of Memory in AI Agents

Most agent systems rely on two broad categories of memory.

Short-Term Memory

Short-term memory handles the immediate context of a conversation and usually lives inside the model's context window. This includes things like:

- Previous messages in the conversation

- Recent tool outputs

- Temporary reasoning steps

Because this information is stored as tokens in the prompt, it is limited by the model's context window. Current GPT-class models may support context windows of tens or hundreds of thousands of tokens, but that capacity is still finite. Once a conversation grows too long, earlier information must be removed or compressed, which is why long conversations eventually lose context.
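The sketch below shows the simplest way to keep short-term memory inside a fixed window: drop the oldest messages first. It assumes a crude word-count `count_tokens` function (real tokenizers count differently) and a hypothetical `trim_history` helper; the 15-token budget is deliberately tiny so the effect is visible.

```python
def count_tokens(text: str) -> int:
    """Crude approximation: one token per whitespace-separated word.
    Real tokenizers (e.g. BPE) produce different counts."""
    return len(text.split())


def trim_history(messages: list[dict], budget: int) -> list[dict]:
    """Keep the most recent messages whose combined size fits the budget.

    Walks the history from newest to oldest, so the oldest turns are
    the first to be dropped when the window fills up.
    """
    kept: list[dict] = []
    used = 0
    for msg in reversed(messages):
        cost = count_tokens(msg["content"])
        if used + cost > budget:
            break
        kept.append(msg)
        used += cost
    kept.reverse()  # restore chronological order
    return kept


history = [
    {"role": "user", "content": "My project uses Postgres and Redis."},
    {"role": "assistant", "content": "Got it, Postgres for storage and Redis for caching."},
    {"role": "user", "content": "Which one should hold session data?"},
]

window = trim_history(history, budget=15)
for msg in window:
    print(msg["role"], ":", msg["content"])
```

Here the first message, which carried the key project detail, is the one that falls out of the window. Compressing old turns into a summary instead of discarding them is the usual alternative.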

Long-Term Memory

Long-term memory exists outside the prompt. It is typically implemented using databases that store structured or semantic representations of past information. For example:

- Conversation summaries

- User preferences

- Knowledge extracted from documents

- Results from previous tasks

When the agent receives a new request, the system searches this stored information and retrieves the most relevant pieces. Those results are then inserted into the prompt before the model runs.
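As an illustration of the structured side of long-term memory, the sketch below uses Python's built-in `sqlite3` module with an in-memory database. The `memories` table, its `kind` column, and the `fetch_memories` helper are hypothetical choices for this example; a production system would use a persistent database and would usually combine lookups like this with semantic search.

```python
import sqlite3

# In-memory SQLite database standing in for a persistent long-term store.
# The schema (kind, content) is a hypothetical example, not a standard.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE memories (id INTEGER PRIMARY KEY, kind TEXT NOT NULL, content TEXT NOT NULL)"
)

rows = [
    ("preference", "Prefers Python examples over Java."),
    ("summary", "Discussed migrating the billing service to Postgres."),
    ("task_result", "Generated a migration checklist for the billing service."),
    ("preference", "Wants answers under 200 words."),
]
conn.executemany("INSERT INTO memories (kind, content) VALUES (?, ?)", rows)


def fetch_memories(kind: str) -> list[str]:
    """Structured lookup: return every stored memory of one kind, oldest first."""
    cur = conn.execute("SELECT content FROM memories WHERE kind = ? ORDER BY id", (kind,))
    return [content for (content,) in cur.fetchall()]


# Before the model runs, stored preferences are pulled out and placed in the prompt.
preferences = fetch_memories("preference")
prompt = "Known user preferences:\n" + "\n".join(f"- {p}" for p in preferences)
print(prompt)
```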

Vector Memory and Retrieval

Most modern agent memory systems rely on vector embeddings. An embedding converts text into a high-dimensional numerical representation that captures semantic meaning. Two pieces of text that discuss similar topics will produce embeddings that are close together in vector space. This allows the system to perform semantic search. The pipeline usually looks like this:

User Message → Embedding Model → Vector Database Search → Retrieve Relevant Memories → Inject Into Prompt → Model Reasoning

Instead of matching exact keywords, the system retrieves information based on meaning. This makes the agent capable of recalling relevant knowledge even if the wording is different.
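The core of semantic search is a similarity score between vectors. The sketch below uses cosine similarity over hand-picked 3-dimensional vectors that stand in for the output of a real embedding model, which would produce hundreds or thousands of dimensions. The labelled dimensions, the stored memories, and the `search` helper are all illustrative assumptions.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hand-picked vectors standing in for embedding-model output.
# Illustrative dimensions: [food, travel, programming].
memory_store = [
    ("User is vegetarian.", [0.9, 0.1, 0.0]),
    ("User is planning a trip to Lisbon in May.", [0.2, 0.9, 0.0]),
    ("User's backend is written in Go.", [0.0, 0.1, 0.95]),
]


def search(query_vec: list[float], k: int = 2) -> list[tuple[str, float]]:
    """Return the k stored memories most similar to the query vector."""
    scored = [(text, cosine_similarity(query_vec, vec)) for text, vec in memory_store]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:k]


# Pretend embedding of "Any restaurant ideas for my holiday?"
query = [0.7, 0.6, 0.0]
for text, score in search(query):
    print(f"{score:.2f}  {text}")
```

The query is meant to represent "Any restaurant ideas for my holiday?", which shares no keywords with the stored memories, yet the vegetarian and travel memories rank highest because their vectors point in a similar direction. A vector database performs the same kind of ranking at scale, typically with approximate nearest-neighbour indexes.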

Memory Retrieval in the Agent Pipeline

When memory is integrated into an agent, the runtime flow typically looks like this:

User Request → Query Memory Store → Retrieve Relevant Context → Build Prompt → Model Generates Plan → Tools Execute → New Information Stored in Memory

Each interaction becomes both a read and a write operation. The agent retrieves information that might help answer the request, and it may store new knowledge generated during the task.

Over time, the system accumulates useful context.
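The read-then-write cycle can be sketched as a single request handler. In this hypothetical example, `AgentMemory.read` ranks entries by word overlap as a simple stand-in for vector search, and `run_tools` is a placeholder for the model's planning and tool execution.

```python
from dataclasses import dataclass, field


@dataclass
class AgentMemory:
    """Toy long-term store. Word overlap stands in for vector search."""
    entries: list[str] = field(default_factory=list)

    def read(self, query: str, k: int = 2) -> list[str]:
        words = set(query.lower().split())
        scored = [(len(words & set(e.lower().split())), e) for e in self.entries]
        # Highest overlap first; entries sharing no words are dropped.
        return [e for score, e in sorted(scored, reverse=True)[:k] if score > 0]

    def write(self, entry: str) -> None:
        self.entries.append(entry)


def run_tools(request: str, context: list[str]) -> str:
    """Hypothetical placeholder for model planning plus tool execution."""
    return f"result for '{request}' using {len(context)} memory item(s)"


def handle_request(memory: AgentMemory, request: str) -> str:
    context = memory.read(request)                 # read: retrieve relevant context
    result = run_tools(request, context)           # model plans, tools execute
    memory.write(f"task: {request} -> {result}")   # write: store new knowledge
    return result


memory = AgentMemory()
print(handle_request(memory, "summarize the quarterly sales report"))
print(handle_request(memory, "compare the sales report with last year"))
print(len(memory.entries))
```

The second request retrieves the record written by the first, which is how context accumulates across interactions.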

Memory Is a Retrieval Problem

One of the most common misconceptions about AI agents is that memory is simply a storage system. In practice, storage is the easy part. The real challenge is retrieval. The system must determine:

- Which memories are relevant to the current task

- How much information should be retrieved

- How to avoid polluting the prompt with irrelevant context

Retrieving too little causes the agent to forget important details. Retrieving too much fills the context window with marginal material and can degrade the quality of the model's output. Designing effective retrieval pipelines is one of the hardest problems in agent architecture.

Memory Shapes Agent Behavior

Memory fundamentally changes what an AI agent can do. Without memory, agents behave like stateless functions. With memory, agents begin to accumulate experience.

They can learn user preferences, remember past research, and maintain continuity across complex tasks. Many of the most advanced agent systems rely heavily on memory layers that sit between the user and the model. These layers allow the system to continuously retrieve knowledge and feed it back into the reasoning process.

Where Memory Fits in the Agent Architecture

At this point in the series, the architecture of an agent system is starting to become clearer.

User → Prompt → Tokens → Model → Tool Layer → External Systems

Memory introduces a persistent knowledge layer that sits alongside the model.

User → Agent Controller → Memory Retrieval → Prompt Construction → Model → Tool Layer → Execution → Memory Update

The model performs reasoning, but the memory layer provides context. Together, they allow the agent to behave as a system that appears to remember.

What Comes Next

Memory allows agents to retain knowledge across tasks. But remembering information is only part of the problem. Agents also need to decide what to do next.

This introduces another critical component of agent systems: Planning. In the next article, we will look at how agents break complex requests into smaller steps and generate execution plans that coordinate tools, reasoning, and memory.