A key component of an AI agent system is the tool layer. Without tools, an agent is just a language model that can generate text. Tools are what allow the model to interact with the outside world. They let an agent query databases, call APIs, trigger workflows, or retrieve information from external systems. In most modern agent architectures, tools are exposed to the model through structured function interfaces. The model does not execute code directly. Instead, it decides which tool to call and produces a structured request that matches the tool's schema. Your application layer then executes that request, returns the result, and the model continues reasoning with the new information.

The Core Execution Loop

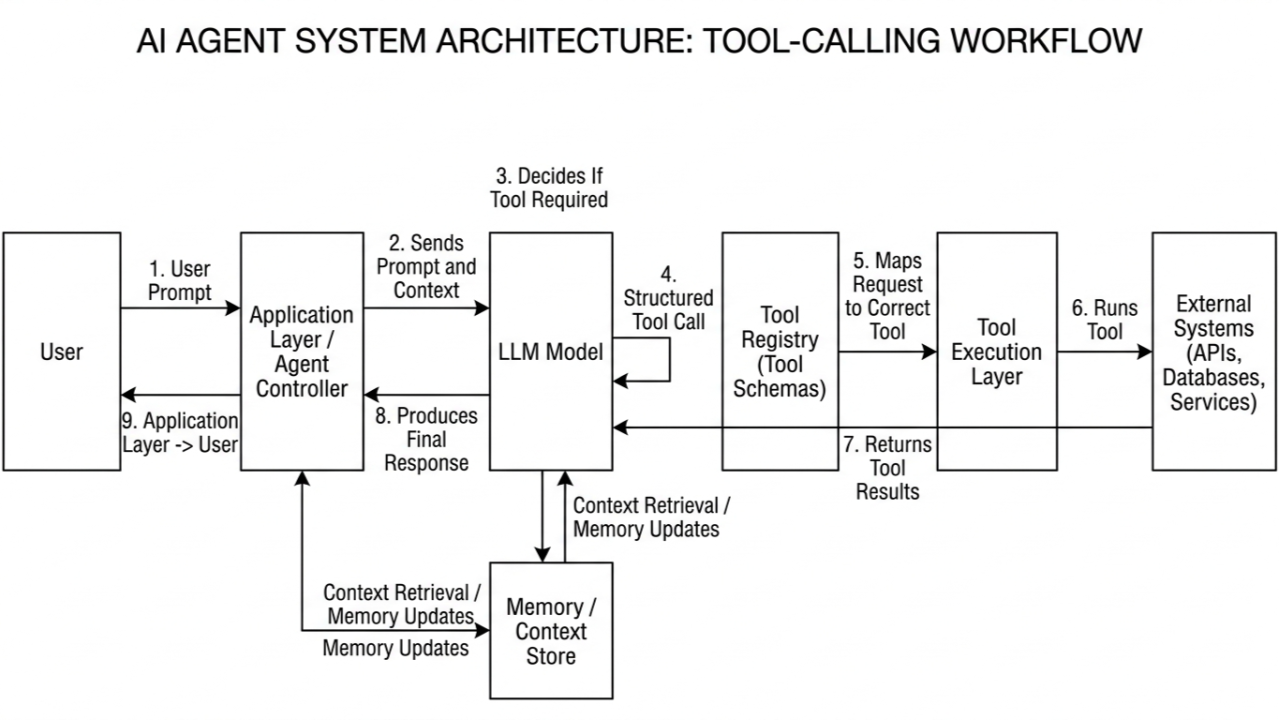

At a high level, the interaction follows a loop:

1. A user request enters the system.

2. The model interprets the request and decides whether a tool is required.

3. If a tool is needed, the model produces a structured tool call.

4. Your system executes the tool and returns the result to the model.

5. The model uses that result to either produce a final response or trigger another action.

This loop is the core execution pattern behind most agent systems today. The model itself does not know how your backend works. It only sees the tool descriptions and parameter schemas that you provide. This makes tool design an important part of agent engineering.
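The loop can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: `fake_model`, `get_weather`, the `TOOLS` registry, and the message format are all hypothetical stand-ins, and a real system would replace `fake_model` with a call to an actual model API.

```python
import json
from typing import Callable


def get_weather(city: str) -> dict:
    # Hypothetical tool with a stubbed result; a real tool would call an external API.
    return {"city": city, "temp_c": 18}


# Hypothetical registry: tool name -> plain Python function.
TOOLS: dict[str, Callable[..., dict]] = {"get_weather": get_weather}


def fake_model(messages: list[dict]) -> dict:
    """Stand-in for a model call: requests one tool, then answers from its result."""
    last = messages[-1]
    if last["role"] == "tool":
        result = json.loads(last["content"])
        return {"type": "final",
                "content": f"It is {result['temp_c']} C in {result['city']}."}
    return {"type": "tool_call", "name": "get_weather",
            "arguments": {"city": "Lisbon"}}


def run_agent(user_request: str, max_steps: int = 5) -> str:
    messages = [{"role": "user", "content": user_request}]
    for _ in range(max_steps):
        decision = fake_model(messages)
        if decision["type"] == "final":
            return decision["content"]
        # The application layer, not the model, executes the structured request.
        tool = TOOLS[decision["name"]]
        result = tool(**decision["arguments"])
        # The result goes back into the conversation so the model can continue.
        messages.append({"role": "tool", "name": decision["name"],
                         "content": json.dumps(result)})
    raise RuntimeError("agent did not finish within max_steps")
```

Calling `run_agent("What's the weather in Lisbon?")` walks through one tool call and one final answer. The `max_steps` cap is a design choice of this sketch so that the loop always terminates.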

Tool Schema Design

A vague tool description leads to unpredictable model behavior. If two tools overlap in capability, the model may choose the wrong one. If parameters are poorly described, the model may hallucinate values or call the tool incorrectly. Many production systems also add a routing or validation step before execution to catch bad calls early. A good tool schema should clearly describe three things:

- What the tool does

- When it should be used

- What parameters it expects

The description should also explain when the tool should not be used. This helps reduce ambiguous decisions inside the model. For example, if an agent has both a search_products tool and a get_product_details tool, the descriptions should make their roles explicit. One retrieves product IDs from a search query. The other retrieves structured data for a specific product ID. Without this distinction, the model may call the wrong tool or repeatedly attempt the same call.
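As an illustration, the two product tools might be described like this. The JSON-Schema-style layout resembles what many function-calling interfaces accept, but the exact field names, the parameter names (`query`, `limit`, `product_id`), and the `check_arguments` helper are assumptions made for this sketch rather than any specific provider's format.

```python
SEARCH_PRODUCTS = {
    "name": "search_products",
    "description": (
        "Search the catalog with a free-text query and return matching product IDs. "
        "Use when the user describes a product and no product ID is known yet. "
        "Do not use to fetch details for a product ID you already have."
    ),
    "parameters": {
        "type": "object",
        "properties": {
            "query": {"type": "string",
                      "description": "Free-text search terms, e.g. 'wireless mouse'."},
            "limit": {"type": "integer",
                      "description": "Maximum number of product IDs to return."},
        },
        "required": ["query"],
    },
}

GET_PRODUCT_DETAILS = {
    "name": "get_product_details",
    "description": (
        "Return structured data for one specific product ID. "
        "Use only after a product ID has been obtained. "
        "Do not use for free-text search."
    ),
    "parameters": {
        "type": "object",
        "properties": {
            "product_id": {"type": "string",
                           "description": "Exact ID returned by search_products."},
        },
        "required": ["product_id"],
    },
}


def check_arguments(schema: dict, arguments: dict) -> list[str]:
    """Return a list of problems; an empty list means the call looks well-formed."""
    params = schema["parameters"]
    problems = [f"missing required parameter: {name}"
                for name in params["required"] if name not in arguments]
    problems += [f"unknown parameter: {name}"
                 for name in arguments if name not in params["properties"]]
    return problems
```

Each description states what the tool does, when to use it, and when not to. A lightweight check such as `check_arguments` can run before execution to catch a call that sends a search query to the details tool.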

Handling Loops and Retries

Another common issue is repeated or unnecessary tool usage. If a model receives incomplete information, it may attempt multiple tool calls while trying to reason its way toward a solution. In some cases this creates loops where the agent keeps calling the same tool without making progress. Guarding against this requires limits on retries, parameter validation, and sometimes a supervisory layer that checks whether the model's action makes sense before executing it.
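One simple way to enforce such limits is a guard that the application consults before executing each call. The sketch below is an assumption about how this could look, not a standard component: the `ToolCallGuard` class, its default limits, and the choice to treat identical name-plus-arguments pairs as repeats are all illustrative.

```python
import json


class ToolCallGuard:
    """Rejects calls once a total budget or a per-call repeat limit is reached."""

    def __init__(self, max_calls: int = 8, max_repeats: int = 2):
        self.max_calls = max_calls
        self.max_repeats = max_repeats
        self.total = 0
        self.seen: dict[str, int] = {}

    def allow(self, name: str, arguments: dict) -> bool:
        # Calls with the same tool name and the same arguments count as repeats.
        key = name + ":" + json.dumps(arguments, sort_keys=True)
        if self.total >= self.max_calls:
            return False
        if self.seen.get(key, 0) >= self.max_repeats:
            return False
        self.total += 1
        self.seen[key] = self.seen.get(key, 0) + 1
        return True
```

When `allow` returns `False`, the application can skip execution and tell the model that the call was refused, which gives it a chance to change approach or stop.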

Execution Order

Execution order is another design consideration. Some tool calls must happen sequentially because one step depends on the output of another. For example, a financial agent might first retrieve a user's portfolio before calculating exposure or risk metrics. Other cases allow parallel execution. If an agent needs weather data and currency exchange rates, both queries can be executed at the same time. Parallelizing independent tool calls can significantly reduce latency in production systems.
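The difference can be sketched with Python's `asyncio`. The three tool functions here are hypothetical stubs with placeholder return values and simulated latency; only `asyncio.gather` and `asyncio.sleep` are real library calls.

```python
import asyncio


async def get_weather(city: str) -> dict:
    await asyncio.sleep(0.1)  # simulated network latency
    return {"city": city, "temp_c": 18}  # placeholder value


async def get_exchange_rate(base: str, quote: str) -> dict:
    await asyncio.sleep(0.1)
    return {"pair": f"{base}/{quote}", "rate": 1.1}  # placeholder value


async def get_portfolio(user_id: str) -> list[dict]:
    await asyncio.sleep(0.1)
    return [{"symbol": "AAA", "value": 600.0},
            {"symbol": "BBB", "value": 400.0}]  # placeholder holdings


def exposure(portfolio: list[dict]) -> dict:
    # Share of total portfolio value held in each position.
    total = sum(position["value"] for position in portfolio)
    return {position["symbol"]: position["value"] / total for position in portfolio}


async def main() -> tuple:
    # Independent calls: run concurrently and wait for both results.
    weather, rate = await asyncio.gather(
        get_weather("Lisbon"),
        get_exchange_rate("EUR", "USD"),
    )
    # Dependent steps: exposure needs the portfolio, so these run in order.
    portfolio = await get_portfolio("user-123")
    return weather, rate, exposure(portfolio)
```

Running `asyncio.run(main())` waits roughly one simulated round trip for the two independent calls instead of two, while the portfolio step still runs strictly before the exposure calculation.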

Observability

Observability becomes critical once agents start interacting with multiple tools. When something goes wrong, you need to know which tool was selected, what parameters were passed, and how the model interpreted the results. Logging every tool invocation is essential. Tracing frameworks can also help reconstruct the execution path during debugging. Many teams integrate agent traces with their existing monitoring stack so tool usage, latency, and error rates can be analyzed alongside other system metrics.
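A minimal way to start is to wrap every tool so that each invocation emits one structured log record. The sketch below uses only the standard library; the `traced` decorator, the logger name `agent.tools`, and the record fields are assumptions for illustration. It captures tool selection, parameters, status, and latency, but not how the model interpreted the result, which would need to be logged at the model-call level.

```python
import functools
import json
import logging
import time
from typing import Any, Callable

logger = logging.getLogger("agent.tools")


def traced(tool: Callable[..., Any]) -> Callable[..., Any]:
    """Wrap a tool so that each invocation emits one JSON log record."""

    @functools.wraps(tool)
    def wrapper(**arguments: Any) -> Any:
        start = time.perf_counter()
        status = "ok"
        try:
            return tool(**arguments)
        except Exception:
            status = "error"
            raise
        finally:
            # Runs on both success and failure, so every call is recorded.
            record = {
                "tool": tool.__name__,
                "arguments": arguments,
                "status": status,
                "latency_ms": round((time.perf_counter() - start) * 1000, 2),
            }
            logger.info(json.dumps(record, default=str))

    return wrapper
```

Because the records are plain JSON lines, they can be shipped to an existing log pipeline and aggregated by tool name, status, or latency.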

Validation

Another important aspect is validation. Tool outputs should not be blindly trusted by the model or by downstream systems. Structured validation layers can verify that tool responses are complete and match expected schemas before feeding them back into the model's context. This prevents cascading failures where one bad tool result corrupts the entire reasoning chain.
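A validation layer can be as small as a function that checks a tool result against an expected shape before the result is appended to the model's context. In this sketch, the field list for `get_product_details`, the `ToolOutputError` type, and the `validate_output` helper are all hypothetical; production systems often use a schema library instead of hand-written checks.

```python
from typing import Any

# Hypothetical expected shape for a get_product_details result: field -> type.
PRODUCT_DETAILS_FIELDS: dict[str, type] = {
    "product_id": str,
    "name": str,
    "price": float,
}


class ToolOutputError(ValueError):
    """Raised when a tool result is incomplete or has the wrong shape."""


def validate_output(result: Any, expected: dict[str, type]) -> dict:
    if not isinstance(result, dict):
        raise ToolOutputError(f"expected an object, got {type(result).__name__}")
    for field, expected_type in expected.items():
        if field not in result:
            raise ToolOutputError(f"missing field: {field}")
        if not isinstance(result[field], expected_type):
            raise ToolOutputError(
                f"field {field} should be {expected_type.__name__}")
    # Only a result that passed every check is handed back to the caller.
    return result
```

If validation fails, the application can return a short error message to the model in place of the malformed result, so the bad data never enters the reasoning chain.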

The Tool Layer as Integration Point

As agent systems grow more complex, the tool layer often becomes the main integration point between the model and the rest of the software stack. Databases, internal APIs, payment systems, search services, and analytics pipelines can all appear as tools from the model's perspective. The agent becomes a planner that decides which capabilities to invoke, while the underlying infrastructure continues to handle the actual execution.

In practice, most production challenges with agents do not come from the model itself. They come from how the model interacts with tools and external systems. Designing clear tool schemas, validating inputs and outputs, and monitoring execution paths are what turn a prototype agent into a reliable system.